Apigee Southbound Connectivity Patterns

Credits

This article is co-authored by @Florian Geiger and @Daniel Strebel.

Thanks to @ozanseymen and @davissean for reviewing drafts of this article.

High-Level Goal

One of the most fundamental requirements of an API platform is to provide a secure and reliable way of connecting to specific target services. This form of connectivity is generally referred to as southbound connectivity. From a networking and security perspective, we can summarize the following objectives for southbound connectivity, regardless of the Apigee deployment model and the location of the target services:

- Connectivity between the Apigee runtime and the target services

The target services need to be reachable from the Apigee runtime. As described later in this article, the specific networking requirements for how to achieve this depend on the Apigee deployment model as well as the location of the target services.

- Limit sources of incoming traffic to Apigee runtime instances

In accordance with security best practices and the defense in depth model, many customers limit inbound traffic by explicitly configuring the IP ranges which are permitted to connect to their target services. This way, they ensure that the incoming traffic originates from the Apigee network.

- Authentication and Encryption

On top of the network-level filtering of incoming traffic, it is also recommended to enforce service-level authentication. Leveraging a PKI architecture for mTLS allows the backend to identify the specific Apigee runtimes and exchange encrypted traffic. Depending on the target service, additional means of application-level identification, authentication or authorization such as API keys or OAuth2 tokens might also be required.

Foundations

To fully understand the architecture patterns in the next section, we have a look at the three principles used to make a secure and robust connection between Apigee and a target service, irrespective of where that target service is deployed.

IP Allow List (on firewall)

The first level of security is to make sure that only traffic coming from Apigee IPs is accepted by the backend. This is done by an IP Allow List on the backend-side with the Apigee IPs configured. Apigee customers can request a fixed set of IPs for this.

Since these IPs might be shared between Apigee SaaS customers or spoofed intentionally, trusting the source IP alone is not a sufficient security mechanism. We therefore recommend adding additional layers of security.
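As a minimal sketch of the allow-list idea, the following shell function compares a source IP against a fixed list. The addresses (taken from documentation-only ranges) and the function name `is_allowed_ip` are hypothetical. In practice this check is usually performed by a firewall or reverse proxy rather than a script, and real allow lists often use CIDR ranges instead of exact matches.

```bash
# Hypothetical allow list: replace with the IPs provided for your Apigee organization.
ALLOWED_IPS="203.0.113.10 203.0.113.11"

# Returns 0 if the given source IP is on the allow list, 1 otherwise.
is_allowed_ip() {
  local candidate="$1" ip
  for ip in $ALLOWED_IPS; do
    if [ "$ip" = "$candidate" ]; then
      return 0
    fi
  done
  return 1
}

# Example: decide whether to accept a connection from a given source IP.
if is_allowed_ip "203.0.113.10"; then
  echo "accept"
else
  echo "reject"
fi
```

A match here only says that the traffic claims to come from an expected address, which is why the following layers are still needed.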

mTLS

The TLS in mTLS (Transport Layer Security, whose predecessor is SSL) is the standard security protocol designed to provide communications security over a computer network. It primarily aims to provide privacy and data integrity between two or more communicating applications, such as a client (like a web browser or the Apigee runtime) and a server (like the target APIs).

Since Apigee and many other SaaS services do not support VPN tunneling or VPC peering, a mutual TLS connection is the most secure option to establish a connection to the target services. More on this can be found in the Apigee FAQ.

The advantage of implementing TLS is to enhance security end-to-end and protect your data from attacks such as client spoofing or man-in-the-middle attacks.

mTLS is also known as mutual authentication, two-way authentication or 2-way TLS. It specifies how two parties authenticate each other via the exchange of PKI certificates. Trust is established by explicitly trusting either the certificate itself or a signing entity along its certification path. This inheritance of trust is also the reason why certificates signed by a public Certificate Authority (CA) should not be used for client authentication: if the server trusts a public CA, any certificate with that CA in its certification chain would be trusted. Hence, clients should use certificates signed by an internal CA only, and the CA certificate should be used on the server side to validate the client certificate.

The TLS protocol by itself only proves the identity of the server to the client using X.509 certificates; the authentication of the client to the server is generally left to the application layer. mTLS fills this gap by attaching a security identity to the clients through certificates, which the server can then use for authentication before establishing the secure communication.

See here for more information on TLS/SSL with Apigee and the Apigee DevRel repository for a simple reference implementation.

@Florian Geiger also recorded a step-by-step video tutorial on how to enable mTLS from within the Apigee UI.

App Level Authentication

In addition to securing the network connection, it is recommended to also implement security at the application level to achieve defense in depth. There are multiple ways to achieve this, ranging from “basic” means such as Basic Authentication to more advanced methods like WS-Security, OAuth2, OIDC and JWT-based authentication.

Achieving this depends on the target service’s capability to validate some sort of authentication. This third level of security might therefore not be available to all services in an enterprise.

High level architecture / Patterns

The actual technical components required to fulfill the requirements stated above depend on both the Apigee deployment model as well as the characteristics of the target service and the available networking infrastructure.

Exposure of Target Services

The first step in making existing services available as targets in Apigee is to ensure connectivity. For a public cloud deployment this means that the target service needs to be reachable from the public internet. A network peering or VPN connection using private IPs is not supported in a public cloud deployment. For the hybrid and private cloud deployment options, the backend needs to be publicly exposed only if the backend and the Apigee runtime reside on disjoint networks with no available private routing path between them.

The backend service or backend pool will be identified by one or more FQDNs and serve a valid certificate for TLS. Depending on the target infrastructure, this can be achieved by the same infrastructure used to handle mTLS and IP allow listing or by a managed cloud service like an External Load Balancer.

Authentication of Traffic from Apigee

Once we enable Apigee to reach the target service, we need to ensure that the target system can identify incoming traffic originating from Apigee. This can be achieved by a layered security model which combines the identification of the network endpoints of the Apigee infrastructure via IPs with the service-level authentication through PKI client certificates.

Because both aspects rely on information which is provided by Apigee, they are best served by an intermediate reverse proxy rather than being hardcoded in the target service. This ensures that the target service is loosely coupled to the Apigee configuration and limits the number of configuration points in a scenario with a large number of target services. As for the reverse proxy, we can choose from a large number of enterprise and open source products such as Envoy, Nginx, F5, HAProxy and many more.

From a design perspective, it is worth noting that this component is on the critical path for every call between Apigee and the target service and should therefore be highly available and horizontally scalable. Depending on the availability of load balancers, backend pools or container orchestration tools, this can be achieved in different ways. To perform both mTLS and IP allow listing, all intermediary networking components such as load balancers must transparently forward the original Apigee IP as well as the client certificate.

Application-Level Authentication

Additional application-level authentication might be required depending on the target service. For example, a target service could require a simple Basic Authentication or a valid OAuth2 access token as part of its own security mechanism. These application-level security protocols would have to be built in addition to the previously described safeguards.
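To illustrate the simplest of these options, the sketch below builds an HTTP Basic Authentication header in shell. The function name and the credentials are placeholders chosen for illustration; in an Apigee proxy the equivalent header would typically be produced by a policy rather than a script.

```bash
# Build an "Authorization: Basic ..." header from a username and password.
# Basic Authentication is an encoding, not encryption, so it should only
# be sent over a TLS-protected connection.
basic_auth_header() {
  local user="$1" password="$2" token
  # base64 may wrap long output, so strip any newlines.
  token=$(printf '%s:%s' "$user" "$password" | base64 | tr -d '\n')
  printf 'Authorization: Basic %s\n' "$token"
}

# Example with placeholder credentials:
basic_auth_header "alice" "s3cret"
```

The resulting header is what the target service would validate in addition to the IP and client certificate checks described above.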

Implementation Examples

To wrap up, we want to look at two specific recommendations for how to implement the aforementioned requirements. The examples are chosen for two different scenarios. The first scenario is based on a hybrid cloud approach where the Apigee API platform runs in the SaaS or hybrid deployment model and the target services are deployed on premises. The second scenario illustrates a public cloud architecture where the Apigee hybrid runtime is co-located with the target services in the same public cloud.

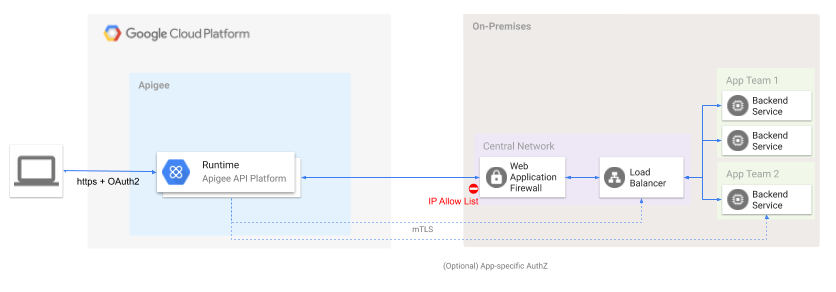

Scenario 1 - Apigee SaaS or hybrid to On-Premise Service

In this scenario Apigee is connecting to an on-premises backend pool. The pattern described here is applicable to both the hybrid and the SaaS deployment option. The on-premises backends are not tightly coupled to the Apigee configuration. The security checks are performed by two logical network appliances. In a first step, a firewall performs the IP allow listing by comparing the source IP of the incoming traffic against its configuration. Subsequently, a load balancer validates the client certificate and terminates the TLS connection. If both checks pass, the call is forwarded to the respective target service.

In this example we have chosen an on-premises backend network. In the context of this architecture the same pattern can also be applied to backends in any public cloud environment. Depending on the target environment, the WAF and Load Balancer components would potentially be replaced with suitable cloud networking and security components.

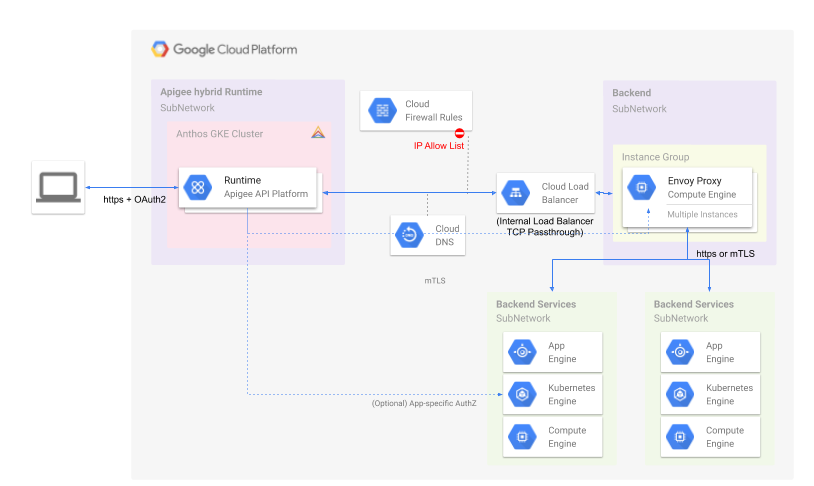

Scenario 2 - Apigee hybrid to GCP

In our second scenario both the Apigee hybrid runtime and the target services reside within the Google Cloud Platform. The Apigee runtime is hosted within its own Anthos cluster in a dedicated network subnet. The firewall rules are configured to allow mTLS traffic from the hybrid cluster to the internal cloud load balancer. The cloud load balancer operates in a TCP passthrough mode and transparently forwards the client certificate to a managed instance group of reverse proxies. The proxies are used to validate the client certificate and forward the traffic to the appropriate target service within the same network or another network with a routing path.

In case the target services are running within a Google Kubernetes Engine (GKE) cluster with Anthos Service Mesh (ASM) or Istio configured, the extra pool of reverse proxies is not required because the ingress gateway of the service mesh can be used directly for the mTLS handshake and to forward traffic to the respective service instances.

Configuration Instructions

As described in the architecture patterns above, there are many different combinations which yield a suitable southbound connectivity configuration. The following section provides a number of links and configuration snippets to get you started:

IP allow listing for Apigee SaaS with a third party network proxy

- Obtain the list of Apigee southbound IPs by following the instructions on the Apigee docs page here.

- Configure your network proxy according to the product specification (in addition to your edge firewall configuration)

mTLS with self signed client cert

- Create a client certificate for Apigee, signed by a private (self-signed) Certificate Authority:

```bash
export LC_CTYPE=C

# Set the name of the certificate (recommended: one per Apigee env)
CERT_NAME=apigee-test

# Make sure the output directory exists before writing any files to it
mkdir -p ./certs/$CERT_NAME

# Create a private Certificate Authority (CA)
head /dev/urandom | tr -dc A-Za-z0-9 | head -c 24 > ./certs/$CERT_NAME/$CERT_NAME-CA.pwd
openssl genrsa -des3 -out ./certs/$CERT_NAME/$CERT_NAME-CA.key -passout file:./certs/$CERT_NAME/$CERT_NAME-CA.pwd 4096
openssl req -x509 -new -nodes -key ./certs/$CERT_NAME/$CERT_NAME-CA.key -sha256 -days 1825 \
  -passin file:./certs/$CERT_NAME/$CERT_NAME-CA.pwd \
  -out ./certs/$CERT_NAME/$CERT_NAME-CA.crt -subj "/CN=$CERT_NAME-CA.demo.org"

# Create a Client Cert (signed by the private CA)
openssl genrsa -out ./certs/$CERT_NAME/$CERT_NAME.key 4096
openssl req -new -key ./certs/$CERT_NAME/$CERT_NAME.key -out ./certs/$CERT_NAME/$CERT_NAME.csr -subj "/CN=$CERT_NAME.demo.org"
openssl x509 -req -in ./certs/$CERT_NAME/$CERT_NAME.csr \
  -CA ./certs/$CERT_NAME/$CERT_NAME-CA.crt -CAkey ./certs/$CERT_NAME/$CERT_NAME-CA.key -CAcreateserial \
  -out ./certs/$CERT_NAME/$CERT_NAME.crt -days 365 -sha256 \
  -passin file:./certs/$CERT_NAME/$CERT_NAME-CA.pwd

# The signing request is no longer needed once the certificate is issued
rm ./certs/$CERT_NAME/$CERT_NAME.csr
```

- Set up a keystore and TargetServer according to the Apigee docs. Add the certificate `certs/$CERT_NAME/$CERT_NAME.crt` and the private key `certs/$CERT_NAME/$CERT_NAME.key` to the keystore.

- Use the CA certificate `certs/apigee-test/apigee-test-CA.crt` to configure the client authentication on your backend proxy.
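Before wiring the CA certificate into a proxy, it can be useful to confirm that the client certificate actually chains to it, since this is essentially the check the proxy performs during the mTLS handshake. The following is a minimal sketch using `openssl verify`; the function name `verify_client_cert` is an assumption made for illustration, and the paths in the usage comment assume the file layout from the certificate creation step.

```bash
# Check that a client certificate was issued by the given CA.
# Prints "<file>: OK" on success; recent OpenSSL versions exit non-zero on failure.
verify_client_cert() {
  local ca_cert="$1" client_cert="$2"
  openssl verify -CAfile "$ca_cert" "$client_cert"
}

# Usage with the files created in the previous step (paths are assumptions):
# verify_client_cert certs/apigee-test/apigee-test-CA.crt certs/apigee-test/apigee-test.crt
```

A certificate signed by any other CA, including a public one, should fail this check, which matches the recommendation to trust only the internal CA for client authentication.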

Operations Scenarios

As part of your configuration you should also consider the following scenarios and come up with a suitable operations plan for each of them:

- Expired certificates - It is good practice to have certificates expire regularly and renew them in the Apigee keystore as described here. Rotating certificates is ideally done in an automated fashion such that southbound connectivity can continue without disrupting traffic. For the necessary configuration please check the Apigee management API.

- Revoked certificates - For certificates which have been compromised you should come up with a plan for how to implement certificate revocation.
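For the expiry scenario, a periodic check can flag certificates that need to be rotated soon. The sketch below relies on the `-checkend` option of `openssl x509`; the function name `cert_valid_for_days`, the 30-day threshold and the certificate path are assumptions for illustration, and uploading the renewed certificate to Apigee is not covered here.

```bash
# Returns 0 if the certificate is still valid in <days> days, non-zero otherwise.
cert_valid_for_days() {
  local cert="$1" days="$2"
  # -checkend takes a number of seconds and exits non-zero if the
  # certificate expires within that window.
  openssl x509 -in "$cert" -noout -checkend "$((days * 86400))" >/dev/null
}

# Usage (path and threshold are assumptions):
# if ! cert_valid_for_days certs/apigee-test/apigee-test.crt 30; then
#   echo "Client certificate expires within 30 days; schedule a rotation."
# fi
```

Running such a check from a scheduler leaves enough lead time to renew the certificate before southbound traffic is affected.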

Great article. But I keep wondering why Google/Apigee do not support some gateway/agent/connector that connects from on-prem to the cloud and then allows bi-directional communication. Following the "reverse invoke" approach. SAP has its SAP Cloud Connector, IBM has its Secure Gateway Client. For less advanced customers, such gateway/agent/connector is an easy and fine solution.

You're right. This pattern is currently not supported. If you're worried about inbound connectivity from the public internet you might want to check out Apigee hybrid and move your runtime in close proximity of your target services.

@guycrets are you looking for something like Interconnect?

https://cloud.google.com/network-connectivity/docs/interconnect

No, rather something like the https://code.google.com/archive/p/google-secure-data-connector/ that Google once supported in combination with AppEngine.

PS: unclear to me if Interconnect can be used with Apigee Edge SAAS.

There is a pattern that allows Apigee Edge SaaS connectivity to on-prem backends via Interconnect. It requires setting up your VPC in GCP (including network peering to on-prem) and deploying a reverse proxy there (e.g. Nginx, Squid) that forwards all incoming requests from Apigee SaaS to the on-prem backend servers via Interconnect.

Found an interesting SNI Proxy project https://github.com/dlundquist/sniproxy

That could be an interesting connection point into private networks (where only one IP needs to be exposed to the public for Apigee SaaS)

Apigee Edge SaaS (current generation) does not support Interconnect.

See docs: https://docs.apigee.com/api-platform/faq/edge-connectivity
