Implementing a Modern Detection Engineering Workflow (Part 3)

Welcome to the final post of my blog series, where I'm demonstrating how to implement a Detection Engineering workflow that uses Detection-as-Code to manage rules in Chronicle. In part one, I talked about required tooling, setting up your GitHub repository, using a detection framework, and setting up a detection lab. I also walked through how to use SSDT for detection testing and wrote an initial UDM search query to match the events related to the attack technique of interest, Kerberoasting. In part two, I covered synchronizing rules between Chronicle and GitHub, staging the new detection idea, writing the rule itself, and finally validation and testing.

In this post I’ll cover:

- Proposing changes to detection content

- Deploying rule updates to Chronicle via automation tooling

- Executing tests to trigger a rule and validate alert generation

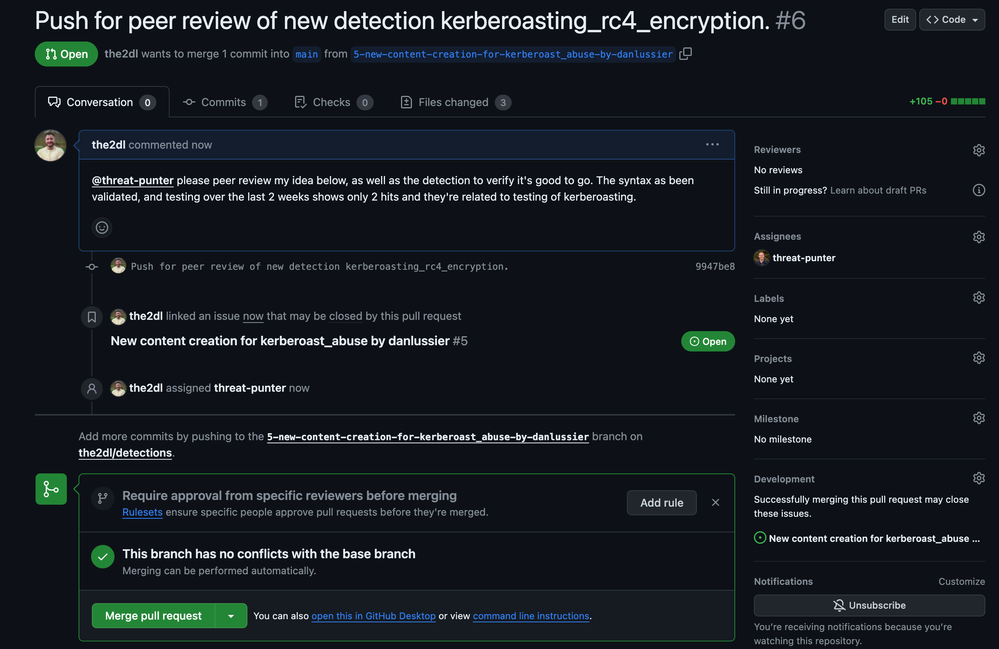

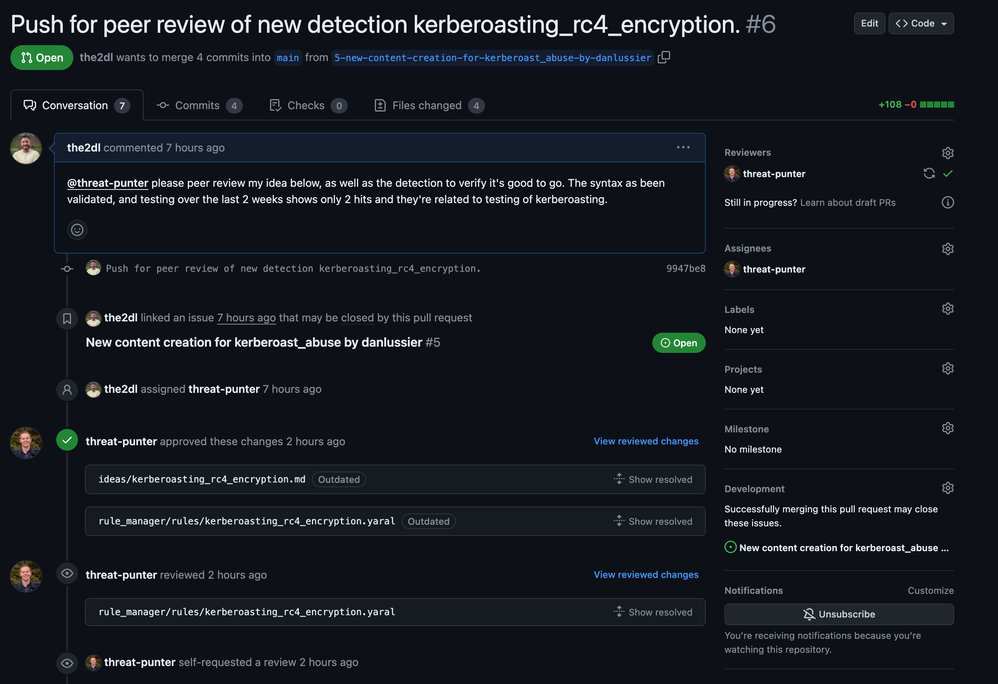

Proposing Changes to Detection Content

Our next step is to create a pull request in GitHub that contains our proposed changes to rules in Chronicle. I’ve pushed my changes to the branch I created in my GitHub repository in part two. Let’s create a pull request and kick off the review process where members of the security team discuss and collaborate on changes to detection content in their security tools.

After the pull request is reviewed and approved, the Detection Engineer can merge their changes into the main branch of the GitHub repository. Once those changes are merged, my GitHub Actions workflow is triggered and deploys the rule changes to Chronicle. We'll take a look at the GitHub Actions workflow in the next section of this post.

Deploying Rule Updates to Chronicle

Below is an example GitHub Actions workflow file that can be used to deploy rules and reference lists to Chronicle. After changes are deployed to Chronicle, the final steps in this workflow take care of pulling the latest version of all rules from Chronicle and committing them to the main branch of the GitHub repository. This keeps the codebase in GitHub in sync with rule data that is generated in Chronicle, such as rule UUIDs, revision_ids, and revision_create_times.

```yaml
name: Deploy Rules and Lists to Chronicle

on:
  push:
    branches:
      # Deploy rules to Chronicle when changes are pushed to the main branch
      - "main"
  # workflow_dispatch allows you to run this workflow manually from the Actions tab
  workflow_dispatch:

permissions:
  contents: write

jobs:
  build-and-execute:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3

      - name: Set up Python
        uses: actions/setup-python@v4
        with:
          python-version: '3.11'
          cache: "pip" # cache pip dependencies to speed up workflow run

      - name: Install dependencies
        run: pip install -r rule_manager/requirements.txt

      - name: Get environment built
        # Decode the base64-encoded MAIN_ENV secret into the .env file used by the rule manager
        run: echo "${{ secrets.MAIN_ENV }}" | base64 --decode > .env

      - name: Push new rule
        run: python -m rule_manager.rule_cli update-remote-rules --skip-archived

      - name: Push updated lists
        run: python -m rule_manager.rule_cli update-remote-reference-lists

      # After any changes are made to rules and reference lists in Chronicle, the latest rules and reference lists are retrieved to update the main branch with the latest data (revision_id, revision_create_time, etc.).
      - name: Add files to git staging area
        run: git add rule_manager/rules rule_manager/rule_config.yaml rule_manager/reference_lists rule_manager/reference_list_config.yaml

      - name: Run git status command
        run: git status # For debugging

      - name: Set the username and email to be associated with git commits
        run: |
          git config --global user.email "${{ secrets.GH_USER_EMAIL }}"
          git config --global user.name "${{ secrets.GH_USER_NAME }}"

      - name: Commit pending changes
        run: |
          # Commit only the paths staged above; avoiding "git add ." keeps the decoded .env file out of the repository
          if ! git diff --cached --quiet; then
            git commit -m "latest content pulled from chronicle api"
            git push
          else
            echo "No changes to commit"
          fi

      - name: Show last git commit message
        run: git log -1 # For debugging
```

Make sure to add the GitHub secrets referenced in the workflow (MAIN_ENV, GH_USER_EMAIL, and GH_USER_NAME) and adjust paths as needed. For the MAIN_ENV secret, base64-encode your environment file, for example with "cat .env | base64 -w 0 > env.txt", then use the contents of env.txt as the secret value.
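If you'd rather generate the MAIN_ENV value from a script, here is a minimal Python sketch of the same step using only the standard library. It produces a single unwrapped base64 line, the equivalent of base64 -w 0. It also checks that the value decodes back to the original bytes, mirroring the workflow's base64 --decode step. The helper names and the default .env and env.txt paths are illustrative assumptions, not part of the rule manager tool.

```python
import base64
from pathlib import Path


def encode_env(data: bytes) -> str:
    """Encode data as a single unwrapped base64 line (like `base64 -w 0`)."""
    return base64.b64encode(data).decode("ascii")


def decode_env(secret: str) -> bytes:
    """Decode a base64 secret back to bytes (like the workflow's `base64 --decode`)."""
    # validate=True rejects stray characters instead of silently ignoring them
    return base64.b64decode(secret, validate=True)


def write_secret_file(env_path: str = ".env", out_path: str = "env.txt") -> str:
    """Encode env_path, write the result to out_path, and return the encoded value."""
    raw = Path(env_path).read_bytes()
    encoded = encode_env(raw)
    # Round-trip check: a value that doesn't decode cleanly would corrupt the CI .env file
    if decode_env(encoded) != raw:
        raise ValueError("base64 round trip failed")
    Path(out_path).write_text(encoded)
    return encoded
```

Run write_secret_file() from the directory containing your .env file, then paste the contents of env.txt into the MAIN_ENV secret. Base64 is an encoding, not encryption, so env.txt still holds your credentials in recoverable form. Delete it afterwards and keep it out of version control.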

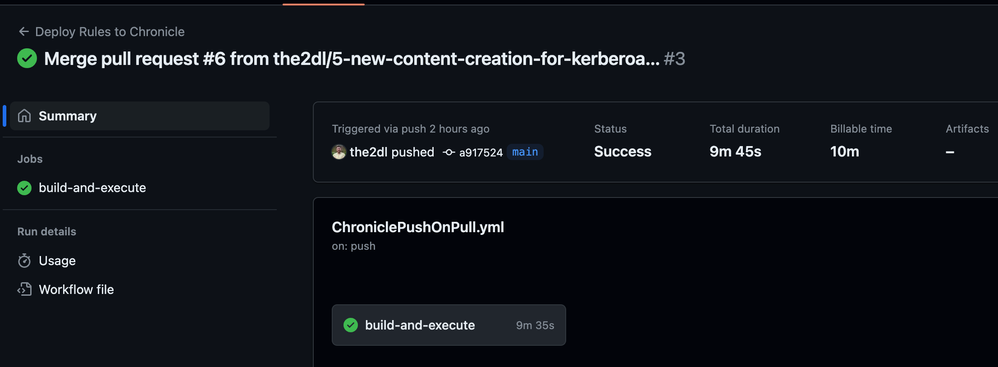

Once the pull request is approved, let's merge it and watch the GitHub Actions workflow take care of creating and configuring the rule in Chronicle.

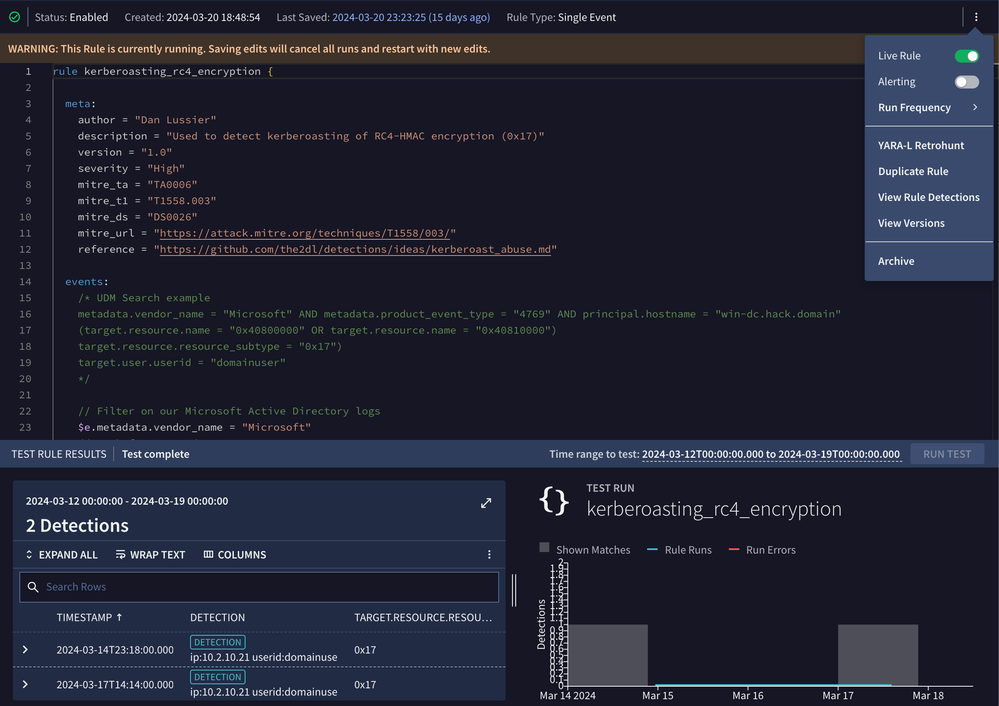

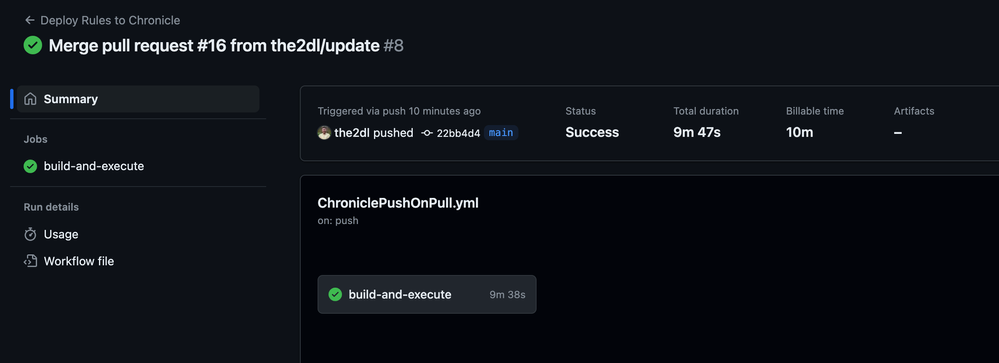

Our GitHub Actions workflow completed successfully. Let’s validate in the Chronicle UI that the rule is there and in the proper status. In addition, we’ll test the rule in the UI to make sure it matches what we saw earlier when we tested the rule via Chronicle’s API.

We can see the detection is Live and has two detections from previous testing.

Triggering the New Rule Using SSDT

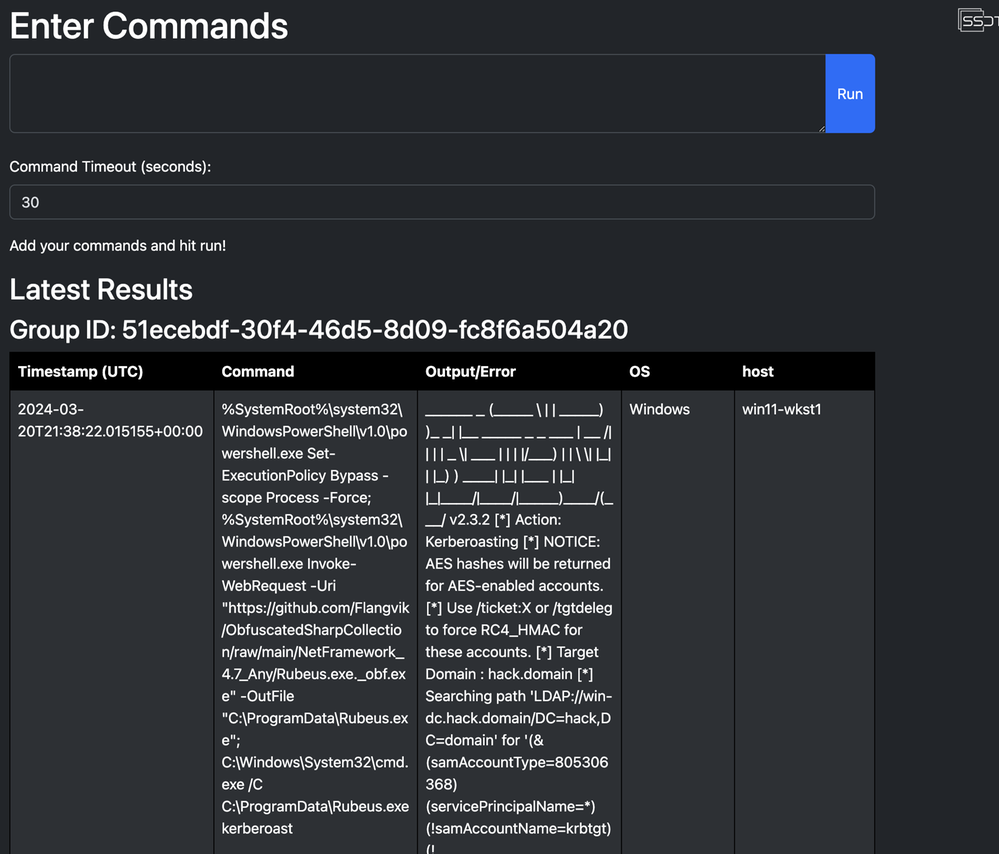

For completeness, let's execute the Kerberoasting technique again using SSDT to trigger our new detection and validate that an alert fires. I enabled alerting on the rule before testing so that we can confirm a case is created in our SOAR.

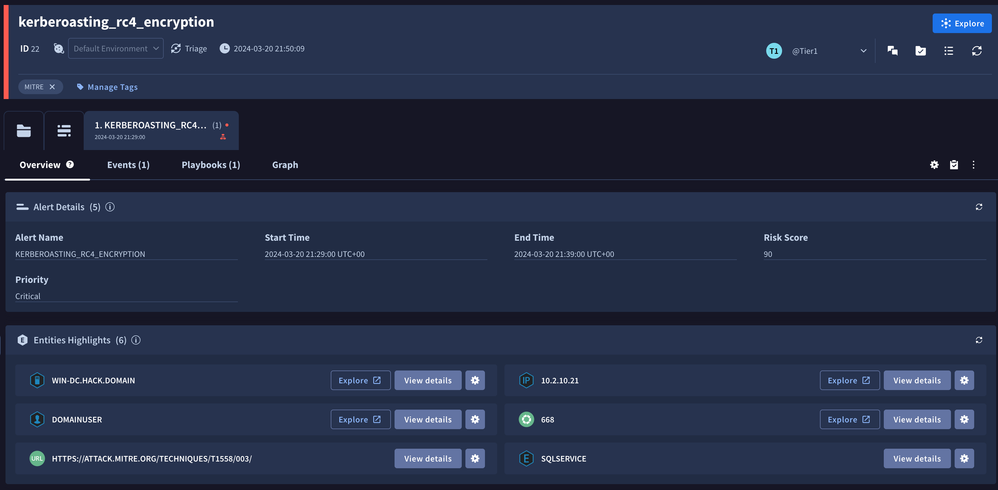

After testing our rule again, we can see that an alert fired. We can quickly use Chronicle SOAR to identify the involved entities: domainuser is the attacker, and sqlservice is the potentially compromised service account.

Rule Tuning with the Help of Reference Lists

Even after the initial tuning we did while developing the detection, we may need to make adjustments if the detection becomes noisy during the 7–14 day burn-in period. I created a new issue from our detection template from earlier to work from.
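When a detection gets noisy during burn-in, a quick tally of which values dominate its detections can point you to exclusion candidates. The Python sketch below assumes you've exported the rule's detections into a list of dictionaries keyed by UDM field name. That input format and the top_noisy_values helper are hypothetical and don't reflect the Chronicle API's actual response schema.

```python
from collections import Counter
from typing import Iterable, Mapping


def top_noisy_values(
    detections: Iterable[Mapping[str, str]],
    field: str = "target.application",
    limit: int = 5,
) -> list[tuple[str, int]]:
    """Return the `limit` most common values of `field` across detections.

    Detections that lack the field are skipped. The one-dict-per-detection
    input is a hypothetical export format used for illustration only.
    """
    counts = Counter(d[field] for d in detections if field in d)
    return counts.most_common(limit)


# Example with made-up sample data
sample = [
    {"target.application": "sqlservice"},
    {"target.application": "example_svc"},
    {"target.application": "sqlservice"},
    {"principal.user.userid": "domainuser"},
]
print(top_noisy_values(sample))  # [('sqlservice', 2), ('example_svc', 1)]
```

Values that account for most of the detections but turn out to be benign are the natural candidates for the reference-list exclusions described next.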

First, let's use the rule manager tool to pull the latest rules from Chronicle. We want to make sure we're up to date with any changes made by other engineers: "python3 -m rule_manager.rule_cli pull-latest-rules --skip-archived". Then let's open the kerberoasting_rc4_encryption.yaral detection in our IDE.

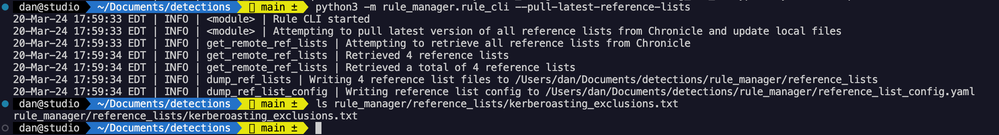

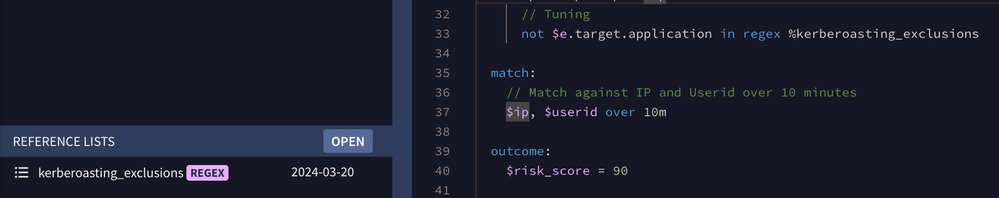

I want to use a reference list named kerberoasting_exclusions that I created in the Chronicle UI to hold Kerberoasting exclusions. Let's pull all of the current reference lists so we have the latest versions, and then update the Kerberoasting one.

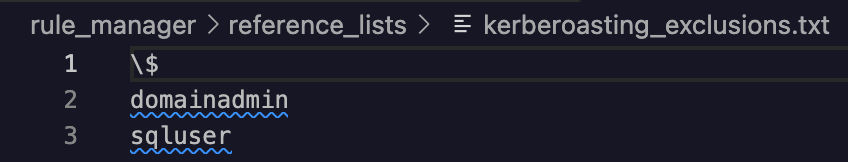

Now let's open the kerberoasting_exclusions.txt file and add our exclusion. The syntax type for this reference list is "RegEx", i.e., the list contains a collection of regular expression patterns to use in our YARA-L rule.

I updated the list to include patterns for the target.application UDM field values domainadmin and sqluser; the \$ pattern was already in the exclusion list. Save your updates to this file and let's move on to reviewing the detection logic for the YARA-L rule.
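A RegEx-type reference list works conceptually like this: an event is excluded when its target.application value matches any pattern in the list. The Python sketch below approximates that check locally so you can sanity-test new patterns before pushing the list. It makes three assumptions: matching is unanchored (search-style), surrounding whitespace is insignificant, and lines starting with // are comments. The helper names are hypothetical.

```python
import re


def load_regex_list(text: str) -> list[re.Pattern[str]]:
    """Compile each non-empty line of a RegEx-type reference list.

    Assumptions: surrounding whitespace is not significant, and lines starting
    with // are comments to skip.
    """
    patterns = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("//"):
            continue
        patterns.append(re.compile(line))
    return patterns


def is_excluded(value: str, patterns: list[re.Pattern[str]]) -> bool:
    """True if any pattern matches anywhere in value (unanchored search is an assumption)."""
    return any(p.search(value) for p in patterns)


# Example list contents modeled on the entries described above
example_list = "\\$\ndomainadmin\nsqluser\n"
patterns = load_regex_list(example_list)
for name in ["WKSTN01$", "domainadmin", "sqlservice"]:
    print(name, is_excluded(name, patterns))
```

To check the real file, you could pass in Path("rule_manager/reference_lists/kerberoasting_exclusions.txt").read_text(). This assumes the list lives in the directory the deployment workflow stages. Python's re module and the RE2 syntax that YARA-L uses differ in places. Treat a passing check as a sanity test rather than proof of how Chronicle will evaluate the list.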

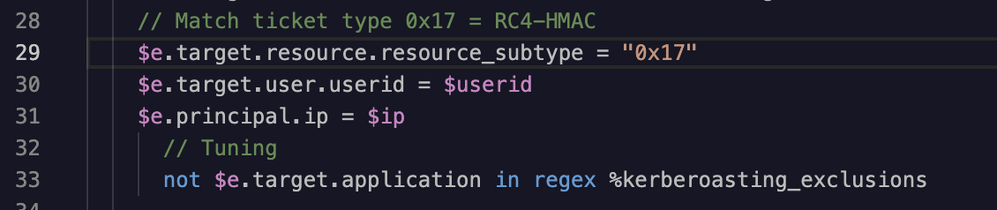

Next, I add lines to the bottom of the events section of the YARA-L rule so that events matching the kerberoasting_exclusions reference list are excluded.

Finally, we follow almost the same workflow that we used earlier when testing and creating a new rule, but this time we’re modifying an existing rule for tuning purposes:

- Validate the syntax of the YARA-L rule

- Run the rule over a 48-hour test window and review any detections that are returned

- Create a new pull request containing the proposed changes

- Obtain peer review & approval for the staged changes

- Merge the pull request and validate that the changes were deployed to Chronicle successfully
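The second step in that list needs a concrete start and end time. The Python sketch below computes a 48-hour window ending now, formatted as RFC 3339 UTC timestamps, a format Chronicle's APIs generally use. rule_test_window is a hypothetical helper, so check which time format your testing tool actually expects before passing these values to it.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

RFC3339_UTC = "%Y-%m-%dT%H:%M:%SZ"


def rule_test_window(hours: int = 48, end: Optional[datetime] = None) -> tuple[str, str]:
    """Return (start, end) as RFC 3339 UTC strings covering the `hours` before `end`.

    `end` defaults to now; a naive `end` is treated as UTC (an assumption).
    """
    if end is None:
        end = datetime.now(timezone.utc)
    elif end.tzinfo is None:
        end = end.replace(tzinfo=timezone.utc)
    # Normalize to UTC and drop sub-second precision for clean timestamps
    end = end.astimezone(timezone.utc).replace(microsecond=0)
    start = end - timedelta(hours=hours)
    return start.strftime(RFC3339_UTC), end.strftime(RFC3339_UTC)


print(rule_test_window(end=datetime(2024, 1, 3, 12, 0, tzinfo=timezone.utc)))
# ('2024-01-01T12:00:00Z', '2024-01-03T12:00:00Z')
```

Pinning end to a fixed time, rather than "now", makes a test run repeatable. Re-running it after tuning then compares detections over exactly the same events.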

Note: be sure to run the rule manager tool's verify-rule command whenever you update your rules. I had a typo in "Kerberoasting" where I left out the "t", and I couldn't figure out why my pull requests were failing. Always double-check the detection logic!

Wrap up

This three-part series provides practical guidance for organizations interested in implementing a modern Detection Engineering workflow, adopting Detection-as-Code to develop and manage detection content in Chronicle, and using free tools to test and validate their monitoring and detection capabilities.

As a reminder, you can find tools, example code, workflows, and more used throughout this project here. These resources are available to the community to help fellow security teams with their Detection Engineering journey. I encourage you to hack away at them and make them fit the needs of your environment.

While this methodology may not be universally applicable, certain components could prove beneficial. Examples include testing and validating detection rules, and managing rules and lists from a repository using software development practices and CI/CD automation.

It is important to consider the unique needs and requirements of your organization and determine if this approach is worthwhile. Ultimately, Chronicle offers an exceptional detection management platform within its native user interface, so if Detection-as-Code does not align with your specific requirements, utilizing the Chronicle detection engine in the user interface remains a great option!
